Posts

Showing posts from 2014

Autohotkey script to generate a BIRT report from Eclipse Report Design perspective

Script to pump output into a text file and open it in an editor

Add Eclipse Project to Local and Remote Git Repository

The installer is unable to instantiate the file KEY_XE.reg

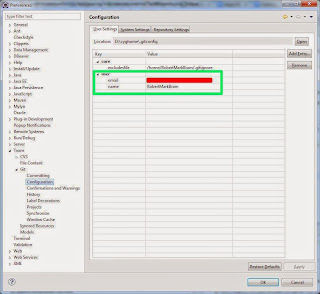

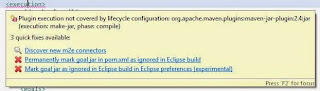

Plugin execution not covered by lifecycle configuration

Flip a coin and get the same results 100 times in a row

Regex to replace upper case with lower case in UltraEdit

Listary, Directory Opus and AutoHotkey - a match made in Geek Heaven
